Earlier tests showed that ZFS performs well in our use case, even better than hardware raid, so new storage servers at our site that will be used within GridPP will use ZFS as their storage file system.

In this post, I will show how a server for that purpose can easily be set up. The previous posts, which also mention details about the hardware used, can be found here, here, and here.

This storage server will be used for LHC data storage, which consists mostly of GB-sized files. When these data files are used as input for user jobs, typically the whole file is copied over to the local node where the user job runs. That means the configuration needs to deal with large sequential reads and writes, but not with small random block access.

The typical hardware configuration of the storage servers we have is:

- Server with PERC H700 and/or H800 hardware raid controller

- 36 disk slots available

- on some servers available through 3 external PowerVault MD devices (3x12 disks)

- on some servers available through 2 external PowerVault MD devices (2x12 disks) and 12 internal storage disks

- 10Gbps network interface

- Dual-CPU (8 or 12 physical cores on each)

- between 12GB and 64GB of RAM

In this blog post, as before, I will describe the ZFS setup based on a machine with 12 internal disks (2TB disks on the H700) and 24 external disks (17x8TB + 7x2TB on the H800). The machine is already set up with SL6 and has the typical GridPP software (DPM clients, xrootd, httpd, ...) installed.

Preparing the disks

Since neither raid controller supports JBOD, each disk first has to be configured as its own single-disk raid0 device. To find out which disks are available and can be used, omreport can be used:

[root@pool7 ~]# omreport storage pdisk controller=0|grep -E "^ID|Capacity"

ID : 0:0:0

Capacity : 1,862.50 GB (1999844147200 bytes)

ID : 0:0:1

Capacity : 1,862.50 GB (1999844147200 bytes)

ID : 0:0:2

Capacity : 1,862.50 GB (1999844147200 bytes)

ID : 0:0:3

Capacity : 1,862.50 GB (1999844147200 bytes)

ID : 0:0:4

Capacity : 1,862.50 GB (1999844147200 bytes)

ID : 0:0:5

Capacity : 1,862.50 GB (1999844147200 bytes)

ID : 0:0:6

Capacity : 1,862.50 GB (1999844147200 bytes)

ID : 0:0:7

Capacity : 1,862.50 GB (1999844147200 bytes)

ID : 0:0:8

Capacity : 1,862.50 GB (1999844147200 bytes)

ID : 0:0:9

Capacity : 1,862.50 GB (1999844147200 bytes)

ID : 0:0:10

Capacity : 1,862.50 GB (1999844147200 bytes)

ID : 0:0:11

Capacity : 1,862.50 GB (1999844147200 bytes)

ID : 0:0:12

Capacity : 278.88 GB (299439751168 bytes)

ID : 0:0:13

Capacity : 278.88 GB (299439751168 bytes)

The disks 0:0:12 and 0:0:13 are the system disks in a mirrored configuration and shouldn't be touched. The disks 0:0:0 to 0:0:11 can be converted to single raid0 using omconfig:

for i in $(seq 0 11);

do

omconfig storage controller controller=0 action=createvdisk raid=r0 size=max pdisk=0:0:$i;

done

The same procedure has to be repeated for the second controller.
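
To avoid retyping the loop for each controller, it can be wrapped in a small helper. The following is only a sketch: the function name make_raid0_cmds is made up for illustration, and it only prints the omconfig commands instead of running them. The controller number and the physical disk IDs of the second controller are not shown in this post and have to be looked up with omreport first.

```shell
#!/bin/bash
# Print (not run) one omconfig call per physical disk, turning each disk
# into its own single-disk raid0 virtual disk.
# $1 = controller number, remaining arguments = physical disk IDs.
make_raid0_cmds() {
    local controller=$1
    shift
    local pdisk
    for pdisk in "$@"; do
        echo "omconfig storage controller controller=${controller} action=createvdisk raid=r0 size=max pdisk=${pdisk}"
    done
}

# First controller: the 12 internal data disks 0:0:0 to 0:0:11.
make_raid0_cmds 0 $(seq -f '0:0:%g' 0 11)
```

Once the printed commands look right, the output can be piped to sh to actually run them.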

After that, the disks are available to the system. To find out which are the 2TB and which are the 8TB disks, lsblk can be used:

[root@pool7 ~]# lsblk |grep disk

sda 8:0 0 278.9G 0 disk

sdb 8:16 0 1.8T 0 disk

sdc 8:32 0 1.8T 0 disk

sdd 8:48 0 1.8T 0 disk

sde 8:64 0 1.8T 0 disk

sdf 8:80 0 1.8T 0 disk

sdg 8:96 0 1.8T 0 disk

sdh 8:112 0 1.8T 0 disk

sdi 8:128 0 1.8T 0 disk

sdj 8:144 0 1.8T 0 disk

sdk 8:160 0 1.8T 0 disk

sdl 8:176 0 1.8T 0 disk

sdm 8:192 0 1.8T 0 disk

sdn 8:208 0 7.3T 0 disk

sdo 8:224 0 7.3T 0 disk

sdp 8:240 0 7.3T 0 disk

sdq 65:0 0 7.3T 0 disk

sdr 65:16 0 7.3T 0 disk

sds 65:32 0 7.3T 0 disk

sdt 65:48 0 7.3T 0 disk

sdu 65:64 0 7.3T 0 disk

sdv 65:80 0 7.3T 0 disk

sdw 65:96 0 7.3T 0 disk

sdx 65:112 0 7.3T 0 disk

sdy 65:128 0 7.3T 0 disk

sdz 65:144 0 7.3T 0 disk

sdaa 65:160 0 7.3T 0 disk

sdab 65:176 0 1.8T 0 disk

sdac 65:192 0 7.3T 0 disk

sdad 65:208 0 1.8T 0 disk

sdae 65:224 0 1.8T 0 disk

sdaf 65:240 0 7.3T 0 disk

sdag 66:0 0 1.8T 0 disk

sdah 66:16 0 1.8T 0 disk

sdai 66:32 0 7.3T 0 disk

sdaj 66:48 0 1.8T 0 disk

sdak 66:64 0 1.8T 0 disk

/dev/sda is the system disk and shouldn't be touched, but all the other disks can be used for the storage setup.
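
With this many disks, picking the device names by eye is error prone. As a small sketch, the helper below (the name disks_of_size is hypothetical) filters a three-column listing of name, size and type, and prints only the whole disks of one size. It compares the size as an exact string, whereas a plain grep for 1.8T treats the dot as a wildcard.

```shell
#!/bin/bash
# Read "NAME SIZE TYPE" lines on stdin and print the names of all whole
# disks whose size column equals the given string exactly (e.g. 1.8T).
disks_of_size() {
    awk -v size="$1" '$3 == "disk" && $2 == size { print $1 }'
}

# Example with three lines in the format described above.
printf '%s\n' 'sda 278.9G disk' 'sdb 1.8T disk' 'sdn 7.3T disk' | disks_of_size 1.8T
```

On the real machine the input would come from lsblk -d -n -o NAME,SIZE,TYPE, which prints exactly these three columns without a header line.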

ZFS installation

The current version of ZFS can be downloaded from the ZFS on Linux web page. Depending on the distribution used, there are also instructions on how to install ZFS through the package management system. In the worst case, one can download the source code and compile it on one's own system.

Since we use SL, which is RH based, we can follow the instructions provided on the page.

After the installation of ZFS through yum, the module needs to be loaded using modprobe zfs to continue without a reboot.

To have file systems based on zfs automounted at system start, unfortunately the selinux options also need to be changed. In the selinux config file, in our case at /etc/sysconfig/selinux, we need to change "SELINUX=enforcing" to at least "SELINUX=permissive".

This will probably be needed as long as zfs is not part of the RH distribution and is therefore not recognized by selinux as a valid file system. More about this issue can be found here.
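
The config change can be scripted, for example when several servers are prepared the same way. This is a minimal sketch with a made-up function name, demonstrated on a temporary file rather than the real config. It assumes GNU sed; the --follow-symlinks option matters because on RH based systems /etc/sysconfig/selinux is typically a symlink to /etc/selinux/config, and a plain sed -i would replace the link with a regular file.

```shell
#!/bin/bash
# Switch SELINUX=enforcing to SELINUX=permissive in the given config file.
# --follow-symlinks (GNU sed) edits the link target instead of replacing
# a symlink with a regular file.
set_selinux_permissive() {
    sed -i --follow-symlinks 's/^SELINUX=enforcing/SELINUX=permissive/' "$1"
}

# Demonstration on a temporary copy rather than the real config file.
tmp=$(mktemp)
printf 'SELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$tmp"
set_selinux_permissive "$tmp"
cat "$tmp"
rm -f "$tmp"
```

The config file is only read at boot. To switch the running system to permissive mode without a reboot, setenforce 0 can be used in addition.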

ZFS storage setup

Now that the ZFS driver is installed and the disks are prepared, we can continue and set up the storage pool.

In this example, we create 2 different storage pools - one for the 2TB disks and one for the 8TB disks - as a good compromise between possible IOPS and available space. For ZFS, it doesn't matter whether the disks within one storage pool are connected through the same controller or through different ones, as is the case for our 2TB disks. For the configuration, we decided to use raidz2, which has 2 redundancy disks similar to raid6. In addition, one disk of each kind will be used as a hot spare.

To do so, we need to find all disks of a given kind in the system and create a storage pool for these disks using zpool create:

[root@pool7 ~]# lsblk |grep 1.8T

sdb 8:16 0 1.8T 0 disk

sdc 8:32 0 1.8T 0 disk

sdd 8:48 0 1.8T 0 disk

sde 8:64 0 1.8T 0 disk

sdf 8:80 0 1.8T 0 disk

sdg 8:96 0 1.8T 0 disk

sdh 8:112 0 1.8T 0 disk

sdi 8:128 0 1.8T 0 disk

sdj 8:144 0 1.8T 0 disk

sdk 8:160 0 1.8T 0 disk

sdl 8:176 0 1.8T 0 disk

sdm 8:192 0 1.8T 0 disk

sdab 65:176 0 1.8T 0 disk

sdad 65:208 0 1.8T 0 disk

sdae 65:224 0 1.8T 0 disk

sdag 66:0 0 1.8T 0 disk

sdah 66:16 0 1.8T 0 disk

sdaj 66:48 0 1.8T 0 disk

sdak 66:64 0 1.8T 0 disk

[root@pool7 ~]# zpool create -f tank-2TB raidz2 sdb sdc sdd sde sdf sdg sdh sdi sdj sdk sdl sdm sdab sdad sdae sdag sdah sdaj spare sdak

[root@pool7 ~]# lsblk |grep 7.3T

sdn 8:208 0 7.3T 0 disk

sdo 8:224 0 7.3T 0 disk

sdp 8:240 0 7.3T 0 disk

sdq 65:0 0 7.3T 0 disk

sdr 65:16 0 7.3T 0 disk

sds 65:32 0 7.3T 0 disk

sdt 65:48 0 7.3T 0 disk

sdu 65:64 0 7.3T 0 disk

sdv 65:80 0 7.3T 0 disk

sdw 65:96 0 7.3T 0 disk

sdx 65:112 0 7.3T 0 disk

sdy 65:128 0 7.3T 0 disk

sdz 65:144 0 7.3T 0 disk

sdaa 65:160 0 7.3T 0 disk

sdac 65:192 0 7.3T 0 disk

sdaf 65:240 0 7.3T 0 disk

sdai 66:32 0 7.3T 0 disk

[root@pool7 ~]# zpool create -f tank-8TB raidz2 sdn sdo sdp sdq sdr sds sdt sdu sdv sdw sdx sdy sdz sdaa sdac sdaf spare sdai

After the zpool create commands, the storage is set up in a raid configuration, a file system has been created on top of it, and it is mounted under /tank-2TB and /tank-8TB. No additional commands are needed and everything is available within seconds.

At this point the system looks like:

[root@pool7 ~]# mount|grep zfs

tank-2TB on /tank-2TB type zfs (rw)

tank-8TB on /tank-8TB type zfs (rw)

[root@pool7 ~]# zpool status

pool: tank-2TB

state: ONLINE

scan: none requested

config:

NAME STATE READ WRITE CKSUM

tank-2TB ONLINE 0 0 0

raidz2-0 ONLINE 0 0 0

sdb ONLINE 0 0 0

sdc ONLINE 0 0 0

sdd ONLINE 0 0 0

sde ONLINE 0 0 0

sdf ONLINE 0 0 0

sdg ONLINE 0 0 0

sdh ONLINE 0 0 0

sdi ONLINE 0 0 0

sdj ONLINE 0 0 0

sdk ONLINE 0 0 0

sdl ONLINE 0 0 0

sdm ONLINE 0 0 0

sdab ONLINE 0 0 0

sdad ONLINE 0 0 0

sdae ONLINE 0 0 0

sdag ONLINE 0 0 0

sdah ONLINE 0 0 0

sdaj ONLINE 0 0 0

spares

sdak AVAIL

errors: No known data errors

pool: tank-8TB

state: ONLINE

scan: none requested

config:

NAME STATE READ WRITE CKSUM

tank-8TB ONLINE 0 0 0

raidz2-0 ONLINE 0 0 0

sdn ONLINE 0 0 0

sdo ONLINE 0 0 0

sdp ONLINE 0 0 0

sdq ONLINE 0 0 0

sdr ONLINE 0 0 0

sds ONLINE 0 0 0

sdt ONLINE 0 0 0

sdu ONLINE 0 0 0

sdv ONLINE 0 0 0

sdw ONLINE 0 0 0

sdx ONLINE 0 0 0

sdy ONLINE 0 0 0

sdz ONLINE 0 0 0

sdaa ONLINE 0 0 0

sdac ONLINE 0 0 0

sdaf ONLINE 0 0 0

spares

sdai AVAIL

errors: No known data errors

[root@pool7 ~]# zpool list

NAME SIZE ALLOC FREE EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

tank-2TB 32.5T 153K 32.5T - 0% 0% 1.00x ONLINE -

tank-8TB 116T 153K 116T - 0% 0% 1.00x ONLINE -

[root@pool7 ~]#

[root@pool7 ~]# zfs list

NAME USED AVAIL REFER MOUNTPOINT

tank-2TB 120K 28.0T 40.0K /tank-2TB

tank-8TB 117K 97.7T 39.1K /tank-8TB

[root@pool7 ~]#

[root@pool7 ~]# df -h|grep tank

tank-2TB 28T 0 28T 0% /tank-2TB

tank-8TB 98T 0 98T 0% /tank-8TB
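
Note that zpool list and zfs list report different sizes: for a raidz pool, zpool list shows the raw capacity including the parity disks, while zfs list and df show the space that is usable for data. As a rough sketch, the usable space can be estimated from the raw size and the number of disks. The function name raidz_usable is made up for this example, and the result is only an upper bound, since ZFS keeps some additional space for itself. That is why the real values above (28.0T and 97.7T) are somewhat lower than the estimates.

```shell
#!/bin/bash
# Rough upper bound for the usable space of one raidz vdev:
# raw size * (disks - parity disks) / disks.
# $1 = raw size as reported by zpool list, $2 = number of disks in the
# vdev (spares not counted), $3 = parity disks (2 for raidz2).
raidz_usable() {
    awk -v raw="$1" -v n="$2" -v p="$3" 'BEGIN { printf "%.1f\n", raw * (n - p) / n }'
}

raidz_usable 32.5 18 2   # tank-2TB: 18 disks in the raidz2, 2 of them parity
raidz_usable 116 16 2    # tank-8TB: 16 disks in the raidz2, 2 of them parity
```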

Setting additional filesystem properties

Since lz4 is available as a compression algorithm and has only a very small impact on performance, we can enable compression on our storage. This probably does not make a large difference for LHC data, but could lower the space needed for the non-LHC experiments that will be supported in the near future.

In addition, extended attributes (xattr) will be stored as system attributes with xattr=sa, which keeps them inside the inode, similar to the behaviour of ext4. We also enable relatime, which limits access time updates in the same way as the relatime mount option of other file systems.

Since we have a spare configured in each pool, we also need to activate automatic replacement in failure cases, making it a hot spare. Another interesting feature of ZFS is that the pool can grow if the disks are replaced by new disks with a larger capacity. All disks within one vdev have to be replaced for this to have an effect, but that can be done one by one over time.

[root@pool7 ~]# zfs set compression=lz4 tank-2TB

[root@pool7 ~]# zfs set compression=lz4 tank-8TB

[root@pool7 ~]# zpool set autoreplace=on tank-2TB

[root@pool7 ~]# zpool set autoreplace=on tank-8TB

[root@pool7 ~]# zpool set autoexpand=on tank-2TB

[root@pool7 ~]# zpool set autoexpand=on tank-8TB

[root@pool7 ~]# zfs set relatime=on tank-2TB

[root@pool7 ~]# zfs set relatime=on tank-8TB

[root@pool7 ~]# zfs set xattr=sa tank-2TB

[root@pool7 ~]# zfs set xattr=sa tank-8TB
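
Since the same settings are applied to every pool, the commands can also be generated in a loop. The sketch below uses a made-up function name and only prints the commands; it also prints a zfs get and a zpool get call per pool, which can be used to read the values back afterwards.

```shell
#!/bin/bash
# Print (not run) the property commands for every pool given as argument.
pool_property_cmds() {
    local pool
    for pool in "$@"; do
        echo "zfs set compression=lz4 ${pool}"
        echo "zfs set relatime=on ${pool}"
        echo "zfs set xattr=sa ${pool}"
        echo "zpool set autoreplace=on ${pool}"
        echo "zpool set autoexpand=on ${pool}"
        # Read the values back to confirm they were applied.
        echo "zfs get compression,relatime,xattr ${pool}"
        echo "zpool get autoreplace,autoexpand ${pool}"
    done
}

pool_property_cmds tank-2TB tank-8TB
```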

Changing disk identification

Identifying disks by letters, like sdb or sdc, is easy to handle and good for setting up a pool. However, the order in which disks are identified can change on a reboot, and it will also change if the disks need to be rearranged on the server, for example after replacing one of the external MD devices.

While in such cases ZFS should still be able to identify the disks belonging to the same pool and import the pool, it is better to use the disk IDs to identify disks. To change this behaviour, we only need to export the pool and import using the disk IDs:

[root@pool7 ~]# zpool export -a

[root@pool7 ~]# zpool import -d /dev/disk/by-id tank-8TB

[root@pool7 ~]# zpool import -d /dev/disk/by-id tank-2TB
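
After the re-import, zpool status lists the disks by their IDs instead of sdX names. When a disk has to be located or replaced, it can be handy to map the IDs back to the current kernel device names. The following is a sketch of one way to do this; the function name list_disk_ids is hypothetical, and it simply resolves the symlinks in /dev/disk/by-id.

```shell
#!/bin/bash
# For every symlink in the given directory, print "<target> <link name>",
# e.g. "/dev/sdb scsi-36a4...". Defaults to /dev/disk/by-id.
list_disk_ids() {
    local dir=${1:-/dev/disk/by-id}
    local link
    for link in "$dir"/*; do
        [ -L "$link" ] || continue
        echo "$(readlink -f "$link") $(basename "$link")"
    done
}

# Only the whole-disk entries, sorted by kernel device name.
list_disk_ids | grep -v -- '-part[0-9]*$' | sort
```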

Making the space available to DPM

Traditionally, the available space on a large vdev was divided into smaller parts by creating partitions with fdisk. In ZFS, however, this can be done directly on top of the pool that was just created. All properties set for the top-level ZFS file system are inherited by the new file systems, so there is no need to set the compression property or other properties again, unless one wants different values than before.

A new file system is created by using zfs create :

[root@pool7 ~]# zfs create -o refreservation=9T tank-8TB/gridstorage01

[root@pool7 ~]# zfs create -o refreservation=9T tank-8TB/gridstorage02

[root@pool7 ~]# zfs create -o refreservation=9T tank-8TB/gridstorage03

[root@pool7 ~]# zfs create -o refreservation=9T tank-8TB/gridstorage04

[root@pool7 ~]# zfs create -o refreservation=9T tank-8TB/gridstorage05

[root@pool7 ~]# zfs create -o refreservation=9T tank-8TB/gridstorage06

[root@pool7 ~]# zfs create -o refreservation=9T tank-8TB/gridstorage07

[root@pool7 ~]# zfs create -o refreservation=9T tank-8TB/gridstorage08

[root@pool7 ~]# zfs create -o refreservation=9T tank-8TB/gridstorage09

[root@pool7 ~]# zfs create -o refreservation=9T tank-8TB/gridstorage10

[root@pool7 ~]# zfs list

NAME USED AVAIL REFER MOUNTPOINT

tank-2TB 144K 28.0T 40.0K /tank-2TB

tank-8TB 90.0T 7.67T 41.7K /tank-8TB

tank-8TB/gridstorage01 9T 16.7T 39.1K /tank-8TB/gridstorage01

tank-8TB/gridstorage02 9T 16.7T 39.1K /tank-8TB/gridstorage02

tank-8TB/gridstorage03 9T 16.7T 39.1K /tank-8TB/gridstorage03

tank-8TB/gridstorage04 9T 16.7T 39.1K /tank-8TB/gridstorage04

tank-8TB/gridstorage05 9T 16.7T 39.1K /tank-8TB/gridstorage05

tank-8TB/gridstorage06 9T 16.7T 39.1K /tank-8TB/gridstorage06

tank-8TB/gridstorage07 9T 16.7T 39.1K /tank-8TB/gridstorage07

tank-8TB/gridstorage08 9T 16.7T 39.1K /tank-8TB/gridstorage08

tank-8TB/gridstorage09 9T 16.7T 39.1K /tank-8TB/gridstorage09

tank-8TB/gridstorage10 9T 16.7T 39.1K /tank-8TB/gridstorage10

[root@pool7 ~]# zfs create -o refreservation=7.66T tank-8TB/gridstorage11

[root@pool7 ~]# zfs list

NAME USED AVAIL REFER MOUNTPOINT

tank-2TB 144K 28.0T 40.0K /tank-2TB

tank-8TB 97.7T 9.91G 41.7K /tank-8TB

tank-8TB/gridstorage01 9T 9.01T 39.1K /tank-8TB/gridstorage01

tank-8TB/gridstorage02 9T 9.01T 39.1K /tank-8TB/gridstorage02

tank-8TB/gridstorage03 9T 9.01T 39.1K /tank-8TB/gridstorage03

tank-8TB/gridstorage04 9T 9.01T 39.1K /tank-8TB/gridstorage04

tank-8TB/gridstorage05 9T 9.01T 39.1K /tank-8TB/gridstorage05

tank-8TB/gridstorage06 9T 9.01T 39.1K /tank-8TB/gridstorage06

tank-8TB/gridstorage07 9T 9.01T 39.1K /tank-8TB/gridstorage07

tank-8TB/gridstorage08 9T 9.01T 39.1K /tank-8TB/gridstorage08

tank-8TB/gridstorage09 9T 9.01T 39.1K /tank-8TB/gridstorage09

tank-8TB/gridstorage10 9T 9.01T 39.1K /tank-8TB/gridstorage10

tank-8TB/gridstorage11 7.66T 7.67T 39.1K /tank-8TB/gridstorage11

Here a new property, refreservation, is set for each of the new file systems. It reserves the specified space for this particular file system, which guarantees that the space is available to it. This is different from a quota, which only sets an upper limit. To make sure the specified space is also not exceeded in our case, a quota of the same size should be set as well. For the last file system in each pool, a larger quota could be specified, which makes sure that any space the other file systems can't use due to their quota limits can be used here.

[root@pool7 ~]# zfs set refquota=7T tank-2TB/gridstorage01

[root@pool7 ~]# zfs set refquota=7T tank-2TB/gridstorage02

[root@pool7 ~]# zfs set refquota=7T tank-2TB/gridstorage03

[root@pool7 ~]# zfs set refquota=7T tank-2TB/gridstorage04

[root@pool7 ~]# zfs set refquota=9T tank-8TB/gridstorage01

[root@pool7 ~]# zfs set refquota=9T tank-8TB/gridstorage02

[root@pool7 ~]# zfs set refquota=9T tank-8TB/gridstorage03

[root@pool7 ~]# zfs set refquota=9T tank-8TB/gridstorage04

[root@pool7 ~]# zfs set refquota=9T tank-8TB/gridstorage05

[root@pool7 ~]# zfs set refquota=9T tank-8TB/gridstorage06

[root@pool7 ~]# zfs set refquota=9T tank-8TB/gridstorage07

[root@pool7 ~]# zfs set refquota=9T tank-8TB/gridstorage08

[root@pool7 ~]# zfs set refquota=9T tank-8TB/gridstorage09

[root@pool7 ~]# zfs set refquota=9T tank-8TB/gridstorage10

[root@pool7 ~]# zfs set refquota=9T tank-8TB/gridstorage11
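
Creating the file systems and setting the quota can be combined, since zfs create accepts several -o options. The sketch below, with a made-up function name, prints the commands for a series of equally sized file systems. The last, differently sized file system in each pool would still be created by hand as shown above.

```shell
#!/bin/bash
# Print (not run) the commands that create <count> file systems named
# gridstorage01, gridstorage02, ... with the same reservation and quota.
# $1 = pool, $2 = number of file systems, $3 = size, e.g. 9T.
gridstorage_cmds() {
    local pool=$1 count=$2 size=$3
    local i name
    for i in $(seq 1 "$count"); do
        name=$(printf '%s/gridstorage%02d' "$pool" "$i")
        echo "zfs create -o refreservation=${size} -o refquota=${size} ${name}"
    done
}

gridstorage_cmds tank-8TB 10 9T
```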

After that, the storage setup is finished. The newly created file systems are already mounted and only need to be made available to the DPM user:

[root@pool7 ~]# chown -R dpmmgr:users /tank-2TB

[root@pool7 ~]# chown -R dpmmgr:users /tank-8TB

That was the last step needed on the storage server and the new file systems can be added to the DPM head node like any other file system before.

ZFS configuration options

To customize the ZFS behaviour, 2 main config files are available - /etc/sysconfig/zfs and /etc/zfs/zed.d/zed.rc. I will not go into detail about these 2 files here, but if you want to set up your own ZFS based storage then have a look at them. The options within are mainly self-explanatory; for example, you can specify where to send email about disk problems and under which circumstances.

As a final section, I want to mention 2 very useful commands - zpool history and zpool iostat.

The first command displays all commands that were run against a zpool since its creation, together with a time stamp. This can be very useful for error analysis and also for repeating a configuration on another server.

[root@pool6 ~]# zpool history tank-2TB

History for 'tank-2TB':

2016-04-05.11:27:43 zpool create -f tank-2TB raidz2 sdd sdf sdg sdi sdj sdl sdm sdz sdaa sdab sdac sdad sdae sdaf sdag sdah sdai sdaj spare sdak

2016-04-05.11:40:32 zfs create -o refreservation=7TB tank-2TB/gridstorage01

2016-04-05.11:40:34 zfs create -o refreservation=7TB tank-2TB/gridstorage02

2016-04-05.11:40:39 zfs create -o refreservation=7TB tank-2TB/gridstorage03

2016-04-05.11:41:57 zfs create -o refreservation=6.97T tank-2TB/gridstorage04

2016-04-05.12:02:12 zpool set autoreplace=on tank-2TB

2016-04-05.12:02:17 zpool set autoexpand=on tank-2TB

2016-04-05.12:38:11 zpool export tank-2TB

2016-04-05.12:38:33 zpool import -d /dev/disk/by-id tank-2TB

2016-04-06.13:41:37 zfs set compression=lz4 tank-2TB

2016-04-07.14:36:37 zfs set relatime=on tank-2TB

2016-04-07.14:36:42 zfs set xattr=sa tank-2TB

2016-04-11.11:28:08 zpool scrub tank-2TB

2016-04-11.14:12:41 zfs set refquota=7T tank-2TB/gridstorage01

2016-04-11.14:12:43 zfs set refquota=7T tank-2TB/gridstorage02

2016-04-11.14:12:48 zfs set refquota=7T tank-2TB/gridstorage03

2016-04-11.14:12:59 zfs set refquota=7T tank-2TB/gridstorage04
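
To reuse such a history on another server, the time stamps have to be removed first. The following is a small sketch of a filter that does this; the function name history_to_cmds is hypothetical. The resulting list should be reviewed before anything is run, since device names in the zpool create line will differ between servers and entries like export, import or scrub are usually not meant to be repeated.

```shell
#!/bin/bash
# Read "zpool history" output on stdin and print only the commands,
# dropping the "History for ..." header, empty lines and the time stamps.
history_to_cmds() {
    awk '/^[0-9][0-9][0-9][0-9]-[0-9][0-9]-[0-9][0-9]\./ { sub(/^[^ ]+ /, ""); print }'
}

# Example with two lines copied from the history shown above.
printf '%s\n' "History for 'tank-2TB':" \
    '2016-04-05.12:02:12 zpool set autoreplace=on tank-2TB' \
    '2016-04-06.13:41:37 zfs set compression=lz4 tank-2TB' | history_to_cmds
```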

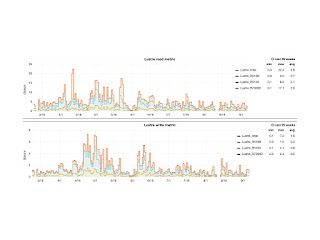

The second command, zpool iostat, displays the current I/O on the pool, separately for read/write operations and for bandwidth. Information can be displayed for a given pool but also for each disk within the pool.

The following example is taken from a server that was configured with 3 raidz2 vdevs. It was recorded while the server was drained using the dpm-drain command on the head node with threads=1 and one drain per file system, resulting in 5 parallel drain commands running:

[root@pool5 ~]# zpool iostat 1

capacity operations bandwidth

pool alloc free read write read write

---------- ----- ----- ----- ----- ----- -----

tank 6.03T 54.0T 110 24 13.5M 1.33M

tank 6.03T 54.0T 4.74K 0 602M 0

tank 6.03T 54.0T 4.63K 0 589M 0

tank 6.03T 54.0T 4.62K 0 587M 0

tank 6.03T 54.0T 4.42K 0 561M 0

tank 6.03T 54.0T 5.27K 0 669M 0

tank 6.03T 54.0T 4.51K 0 573M 0

tank 6.03T 54.0T 4.47K 0 568M 0

tank 6.03T 54.0T 4.47K 0 568M 0

tank 6.03T 54.0T 4.26K 0 542M 0

tank 6.03T 54.0T 4.56K 0 579M 0

tank 6.03T 54.0T 4.82K 0 613M 0

tank 6.03T 54.0T 4.60K 0 585M 0

tank 6.03T 54.0T 4.73K 0 601M 0

tank 6.03T 54.0T 4.20K 0 533M 0

tank 6.03T 54.0T 4.52K 0 574M 0

tank 6.03T 54.0T 3.72K 0 473M 0

tank 6.03T 54.0T 3.80K 0 484M 0

tank 6.03T 54.0T 4.46K 0 567M 0

tank 6.03T 54.0T 5.16K 0 655M 0

tank 6.03T 54.0T 5.25K 0 667M 0

[root@pool5 ~]# zpool iostat -v 1

capacity operations bandwidth

pool alloc free read write read write

------------------------------------------ ----- ----- ----- ----- ----- -----

tank 5.87T 54.1T 4.77K 0 606M 0

raidz2 1.96T 18.0T 1.60K 0 202M 0

scsi-36a4badb044e936001e55b2111ca79173 - - 329 0 21.2M 0

scsi-36a4badb044e936001e55b2461fc3cb38 - - 354 0 20.8M 0

scsi-36a4badb044e936001e55b25520b22e10 - - 337 0 21.3M 0

scsi-36a4badb044e936001e55b2622171ff0b - - 333 0 21.3M 0

scsi-36a4badb044e936001e55b26e2232640f - - 334 0 21.0M 0

scsi-36a4badb044e936001e55b27d230ce0f6 - - 333 0 21.2M 0

scsi-36a4badb044e936001e55b293245ae11b - - 335 0 21.1M 0

scsi-36a4badb044e936001e55b2b426603fbe - - 335 0 21.3M 0

scsi-36a4badb044e936001e55b2c4274ec795 - - 338 0 20.9M 0

scsi-36a4badb044e936001e55b2d128122551 - - 318 0 20.8M 0

scsi-36a4badb044e936001e55b2f42a2e3006 - - 342 0 21.3M 0

raidz2 1.96T 18.0T 1.59K 0 203M 0

scsi-36a4badb044e936001e830de6afdb1d8f - - 332 0 21.6M 0

scsi-36a4badb044e936001e55b3082b59c1da - - 310 0 21.2M 0

scsi-36a4badb044e936001e55b3142c0ac749 - - 311 0 21.5M 0

scsi-36a4badb044e936001e55b31f2cbeb648 - - 319 0 21.8M 0

scsi-36a4badb044e936001e55b44e3ecc77ea - - 313 0 21.7M 0

scsi-36a4badb044e936001e55b33b2e6172a4 - - 213 0 21.8M 0

scsi-36a4badb044e936001e55b34c2f70184c - - 307 0 21.4M 0

scsi-36a4badb044e936001e55b358301ee6a2 - - 319 0 21.8M 0

scsi-36a4badb044e936001e55b36530e4cb2a - - 331 0 21.8M 0

scsi-36a4badb044e936001e55b3793218970b - - 325 0 21.8M 0

scsi-36a4badb044e936001e55b38532cf8c68 - - 324 0 21.8M 0

raidz2 1.96T 18.0T 1.58K 0 201M 0

scsi-36a4badb044e936001e55b39033741ccf - - 342 0 21.8M 0

scsi-36a4badb044e936001e55b39b3421403b - - 323 0 21.4M 0

scsi-36a4badb044e936001e55b3de3822569a - - 335 0 21.8M 0

scsi-36a4badb044e936001e55b3eb38df509e - - 328 0 21.7M 0

scsi-36a4badb044e936001e55b3f839a79c83 - - 301 0 21.5M 0

scsi-36a4badb044e936001e55b4023a46ae2a - - 325 0 21.7M 0

scsi-36a4badb044e936001e55b40f3b0100cb - - 314 0 21.5M 0

scsi-36a4badb044e936001e55b41d3bdd5a86 - - 335 0 21.5M 0

scsi-36a4badb044e936001e55b42b3cb239cd - - 324 0 21.8M 0

scsi-36a4badb044e936001e55b4363d55d784 - - 322 0 21.7M 0

scsi-36a4badb044e936001e55b4413e09a9f1 - - 331 0 21.8M 0

------------------------------------------ ----- ----- ----- ----- ----- -----
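
To turn the per-second samples of the pool-level output (without -v) into a single number, the read bandwidth column can be averaged. The helper below is only a sketch with a made-up function name; it assumes the column layout shown above, with the read bandwidth in the sixth column, and reports the result in the same M units that zpool iostat prints. Header and separator lines are skipped automatically.

```shell
#!/bin/bash
# Read pool-level "zpool iostat" lines on stdin and print the average read
# bandwidth in M. Column 6 is the read bandwidth; K, M and G suffixes
# are converted, lines whose column 6 has no such value are skipped.
avg_read_bw() {
    awk '
        $6 ~ /^[0-9.]+[KMG]$/ {
            v = $6 + 0                       # numeric part, suffix ignored
            u = substr($6, length($6))       # last character = unit
            if (u == "K") v /= 1024
            if (u == "G") v *= 1024
            sum += v; n++
        }
        END { if (n) printf "%.1f\n", sum / n }
    '
}

# Example with three samples copied from the output above.
printf '%s\n' 'tank 6.03T 54.0T 4.74K 0 602M 0' \
              'tank 6.03T 54.0T 4.63K 0 589M 0' \
              'tank 6.03T 54.0T 4.62K 0 587M 0' | avg_read_bw
```

When feeding in live output, the first sample should be left out, because zpool iostat starts with an average since the pool was imported rather than a current value.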

One important point to keep in mind is that all properties set with zfs or zpool are stored within the pool and its file systems and not in OS config files! That means that after an OS upgrade, a zpool import makes all properties - like mount point, quota, reservations, compression, history - instantly available again. There is no need to manually touch any system config file, like /etc/fstab, to make the storage available. This is also true for other properties, like NFS sharing, but since that is not needed in our case I haven't described it. To get an idea of which properties are available and what else one can do with ZFS, zfs get all and zpool get all are useful.